Earlier experiments showed how an RL agent can learn basic strategy, adapt to real casino rules, and rediscover card counting...

The toy blackjack of Notebook 1 was a clean learning environment: a single deck, only Hit or Stand, and reshuffling every hand. But real casino blackjack is more complex. Players face multiple decks, expanded actions like Double Down, and payout rules that tilt the game slightly further in the house’s favor.

This second experiment asks whether an RL agent can still learn realistic play when the rules reflect what happens at a casino table.

The environment now includes multiple decks, casino payout rules, and a third action, Double Down:

    # Action space: 0 = Hit, 1 = Stand, 2 = Double
    action = env.action_space.sample()

The state and action spaces are much larger now. Monte Carlo control, which waits until the end of each episode to update values, becomes inefficient. Instead, Q-learning is used.
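As a rough illustration of what the third action means for a tabular agent, the sketch below keeps one row of three action values per state and picks actions epsilon-greedily. The state layout `(player total, dealer upcard, usable ace)`, the `choose_action` helper, and the epsilon-greedy scheme itself are assumptions made for this example; the notebook's own exploration strategy is not shown here.

```python
import random
from collections import defaultdict

import numpy as np

HIT, STAND, DOUBLE = 0, 1, 2  # same encoding as the action-space comment
N_ACTIONS = 3

# One row of action values per state, created lazily on first visit.
Q = defaultdict(lambda: np.zeros(N_ACTIONS))


def choose_action(Q, state, epsilon, rng=random):
    """Epsilon-greedy (assumed scheme): explore with probability epsilon."""
    if rng.random() < epsilon:
        return rng.randrange(N_ACTIONS)
    return int(np.argmax(Q[state]))


# Hypothetical state layout: (player total, dealer upcard, usable ace).
state = (11, 6, False)
Q[state][DOUBLE] = 0.3  # pretend the agent has learned that doubling 11 pays
print(choose_action(Q, state, epsilon=0.0))
```

With `epsilon=0.0` the choice is purely greedy, so the call returns the index of the largest value in that state's row. Raising epsilon trades some of that exploitation for random exploration of all three actions.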

At each step, the Q-value update is:

    target = reward + gamma * np.max(Q[next_state])
    Q[state][action] += alpha * (target - Q[state][action])

This incremental update lets the agent converge on useful strategies more quickly, even in a complex environment.
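The update above can be wrapped in a small function. One detail worth making explicit is the end of a hand: once the episode is over there is no next state to bootstrap from, so the target reduces to the reward alone. The sketch below assumes a `done` flag, a hypothetical `(total, upcard, usable ace)` state layout, and illustrative values for `alpha` and `gamma`; whether the notebook handles terminal states this way is not shown in the text.

```python
from collections import defaultdict

import numpy as np

N_ACTIONS = 3  # 0 = Hit, 1 = Stand, 2 = Double


def q_update(Q, state, action, reward, next_state, done, alpha=0.1, gamma=1.0):
    """Apply one tabular Q-learning update and return the TD error."""
    # A finished hand has no future value, so the bootstrap term is dropped.
    bootstrap = 0.0 if done else gamma * np.max(Q[next_state])
    target = reward + bootstrap
    td_error = target - Q[state][action]
    Q[state][action] += alpha * td_error
    return td_error


Q = defaultdict(lambda: np.zeros(N_ACTIONS))
s, s_next = (13, 6, False), (18, 6, False)  # hypothetical states

# Stand on 18 and win: terminal step, so the target is just the reward.
q_update(Q, s_next, 1, reward=1.0, next_state=None, done=True)
# Hit on 13 and reach 18: non-terminal step, bootstraps from Q[s_next].
q_update(Q, s, 0, reward=0.0, next_state=s_next, done=False)
print(Q[s_next], Q[s])
```

The second call shows how value propagates backward: the win recorded for standing on 18 raises the estimate for hitting on 13 without waiting for a full-episode return, which is the efficiency gain over Monte Carlo control.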

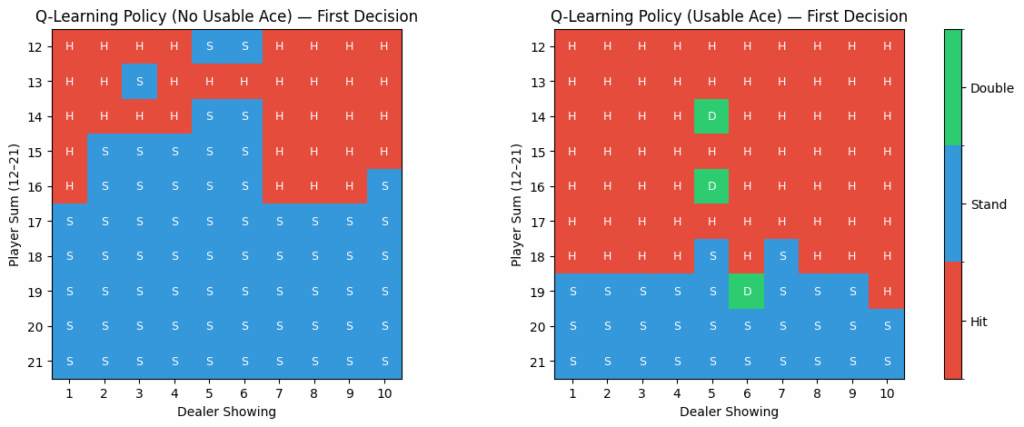

The policy is summarized in grids, broken down by whether the player has a usable ace.
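A minimal sketch of how such grids could be derived from a Q-table: for each player total and dealer upcard, take the action with the highest value and write its letter into the grid. The state layout `(player total, dealer upcard, usable ace)`, the helper names, and the `.` marker for unvisited states are assumptions for illustration, and the Q-values shown are made up.

```python
import numpy as np

ACTION_LABELS = {0: "H", 1: "S", 2: "D"}  # Hit, Stand, Double


def policy_grid(Q, usable_ace, totals=range(4, 22), upcards=range(1, 11)):
    """Return rows of greedy-action letters; '.' marks states never visited."""
    rows = []
    for total in totals:
        row = []
        for upcard in upcards:
            values = Q.get((total, upcard, usable_ace))
            if values is None:
                row.append(".")
            else:
                row.append(ACTION_LABELS[int(np.argmax(values))])
        rows.append(row)
    return rows


def print_grid(grid, totals=range(4, 22)):
    """Print one line per player total, one column per dealer upcard."""
    for total, row in zip(totals, grid):
        print(f"{total:>2} " + " ".join(row))


# Illustrative values only, not results from the trained agent.
Q = {
    (11, 6, False): np.array([0.10, -0.20, 0.40]),
    (16, 10, False): np.array([-0.55, -0.58, -1.10]),
    (18, 6, True): np.array([0.05, 0.25, 0.20]),
}
hard = policy_grid(Q, usable_ace=False)
soft = policy_grid(Q, usable_ace=True)
print_grid(hard)
```

Calling `Q.get` rather than indexing keeps the lookup from creating empty rows when `Q` is a `defaultdict`, so unvisited states stay visibly blank in the grid.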

After training on hundreds of thousands of simulated hands, the agent’s estimated expected value (EV) converges around -4% per initial bet.
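For reference, an EV figure of this kind can be estimated by playing the greedy policy over many hands and averaging the return per initial bet. The sketch below also reports a standard error, which shrinks in proportion to 1/sqrt(N) as the number of hands N grows. The `estimate_ev` helper and the sample returns are hypothetical; a doubled hand counts as plus or minus 2 units because the result is normalized by the initial bet.

```python
import math
import statistics


def estimate_ev(returns_per_hand):
    """Mean return per initial bet and its standard error."""
    n = len(returns_per_hand)
    mean = statistics.fmean(returns_per_hand)
    # Sample standard deviation divided by sqrt(n) gives the standard error.
    stderr = statistics.stdev(returns_per_hand) / math.sqrt(n)
    return mean, stderr


# Made-up returns in units of the initial bet: win, loss, lost double, push.
sample_returns = [1.0, -1.0, -2.0, 0.0]
ev, se = estimate_ev(sample_returns)
print(f"EV = {ev:+.3f} per initial bet (standard error {se:.3f})")
```

Reporting the standard error alongside the mean makes it easier to judge whether a difference of a few percentage points reflects the policy or just sampling noise.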

The agent therefore loses more than the theoretical house edge of ~0.5% would imply. The gap comes from approximations and the limitations of tabular Q-learning, but the broad shape of play remains realistic.

